Google is rapidly redefining how large-scale AI infrastructure is built and deployed, with the majority of its workloads now running on proprietary chips. As Qualtrim noted, the latest data shows a decisive move toward internalization - positioning the company not just as a technology leader, but as the largest consumer of its own AI hardware.

The Scale of Google's Internal TPU Shift

The key data point is striking: over 90% of Google's internal AI workloads are now powered by its Tensor Processing Units. That level of concentration signals a near-complete transition away from reliance on external chips for internal operations.

This is not a gradual trend - it reflects a fully developed structure where in-house infrastructure dominates. The scale of deployment implies that Google's TPU ecosystem is no longer supplementary but central to its AI strategy.

A related article, "NVDA Stock Faces New Threat as Google's TPU Shift and Buffett's $5.1B Bet Reshape AI Chips," explored the competitive implications of this transition directly, showing how Google's internalization strategy is already reshaping the external chip market that Nvidia has dominated.

From External Dependence to Full Google TPU Control

The structure outlined in the data shows a clear transformation in how compute resources are managed. Instead of distributing workloads across multiple hardware providers, Google has consolidated execution within its own stack:

- More than 90% of AI workloads running on TPUs

- Internal chip usage becoming the primary compute layer

- A system designed around proprietary hardware rather than external supply

Qualtrim's tweet also highlights a secondary effect: billions of dollars that would otherwise flow to external suppliers are now retained within Google's ecosystem. That capital retention is as strategically significant as the technical architecture itself.

A Structural Redefinition of AI Infrastructure

What stands out is the degree of control. By running the vast majority of workloads internally, Google effectively defines its own compute environment - from chip design to deployment. That level of vertical integration creates a self-contained system that reduces dependency on external supply chains and pricing dynamics simultaneously.

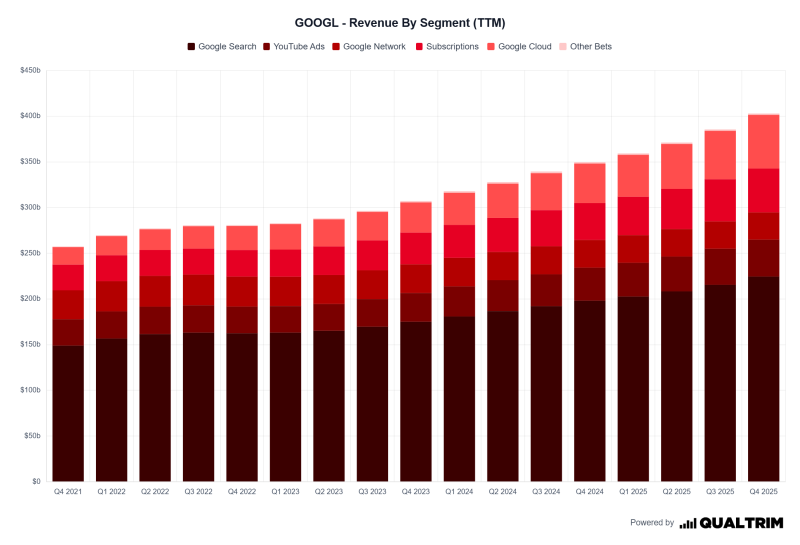

"AI Infrastructure Spending Soars as Big Tech Pushes Toward $125B in Capex" places Google's TPU strategy within the broader hyperscaler capex race, showing how internal chip development is becoming a core component of the infrastructure investment thesis rather than a niche efficiency play.

"Google's Vast Reach: Can $GOOG Keep Growing?" adds the business model dimension, framing TPU dominance as one piece of a larger platform strategy in which controlling the compute layer reinforces Google's position across search, cloud, and AI services at once.

Google's model demonstrates that AI compute internalization already works at massive scale, and the 90% threshold sets a new benchmark that other hyperscalers will inevitably be measured against.

Eseandre Mordi
