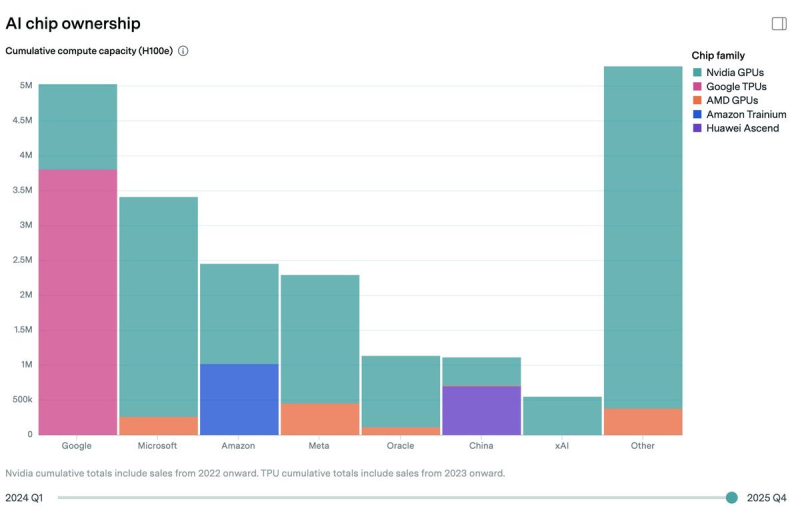

As Oguz Erkan argued, roughly 40% of Amazon's AI compute capacity now comes from custom chips, with the bulk of inference workloads running on Trainium. The chart supports the broader point that Amazon is no longer just a buyer of external accelerators: it shows a sizable Amazon bar with a distinct Trainium segment, and only Google appears more deeply integrated with proprietary silicon, through its TPUs.

Where Amazon's Chip Mix Starts to Matter

The chart is less about headline size than composition. Nvidia GPUs still dominate much of the industry's cumulative compute capacity, but Amazon's stack stands out because a meaningful portion is now assigned to Trainium rather than relying on third-party hardware alone. That is the key strategic signal: AWS is building a hybrid model, keeping access to Nvidia while expanding its own silicon footprint.

That matters more as AI usage matures. Training remains compute-intensive, but inference is where scale economics start to bite. If Amazon can serve a larger share of real-world AI traffic on its own chips, the payoff is not just supply flexibility - it is potentially better cost control across the entire AWS platform.

Google Leads in Integration, but AMZN Brings Volume

Google is the obvious comparison point in the chart. Its bar shows the heaviest dependence on custom TPUs, making it the clearest peer in vertically integrated AI infrastructure. But Amazon's case is different - AWS operates on a larger commercial cloud base, which gives even partial chip internalization more financial weight.

The competitive question is not simply who built the better chip. It is who can translate chip ownership into real cloud economics at scale. The report "AMZN Extends Lead in Cloud Revenue as Google Accelerates Growth" reinforces AWS's scale advantage in the broader cloud race, making Amazon's growing Trainium footprint more consequential than it would be on a smaller platform.

The Inference Shift Could Change the AMZN Margin Story

The core claim is that efficiency should improve as workloads move from training to inference - and that is the most investable part of the argument. Inference is recurring, operational, and highly sensitive to cost per computation. A company that can push more of that traffic onto internally optimized silicon has a clearer path to defending margins while still expanding capacity.

The report "Amazon Pushes Trainium4 Chip Development With Nvidia NVLink as Costs Rise 30-40% Annually" frames custom hardware as both a cost response and a strategic infrastructure layer, suggesting that the Trainium roadmap is driven by external cost pressure as much as by internal ambition.

Anthropic Adds a Demand-Side AMZN Catalyst

The bullish case does not rest on chip architecture alone. It also leans on customer momentum - specifically Anthropic, described as AWS's preferred training and inference partner, with revenue reportedly tripling in the first quarter. If that demand trajectory holds, Amazon is not just building chips for optionality; it is building them for a rapidly scaling AI tenant base.

The report "AMZN Stock News: Anthropic Expands AI Partnership" places Trainium deployment at the center of Amazon's long-term AI infrastructure strategy, reinforcing that the chip investment and the customer relationship are being developed in parallel rather than independently.

Usman Salis